A new productivity tool looks efficient during the first week.

The interface feels cleaner.

The automation appears faster.

The feature list promises less chaos.

Then reality slowly surfaces.

Notifications multiply.

Workflows become fragmented.

Exports are limited.

The setup becomes harder to maintain than the old system.

Most people do not suffer from a lack of tools.

They suffer from poor tool decisions repeated over time.

That is why high-performing operational systems rely on evaluation frameworks before implementation.

A decision scorecard creates structure before commitment.

Instead of asking:

“Which tool looks better?”

You begin asking:

“Which system creates the lowest long-term operational friction?”

What Is a Decision Scorecard?

A decision scorecard is a structured evaluation framework used to compare tools, workflows, platforms, or operational systems using weighted criteria instead of emotional preference.

The purpose is not perfection.

The purpose is:

- reducing impulsive decisions

- improving consistency

- lowering switching risk

- increasing long-term execution reliability

A proper scorecard turns vague preferences into measurable trade-offs.

Why Most Tool Decisions Fail

Most productivity tool decisions are based on:

- aesthetics

- recommendations

- social proof

- temporary frustration

- feature excitement

Very few people evaluate:

- maintenance overhead

- migration difficulty

- workflow compatibility

- export independence

- operational complexity

That creates a predictable pattern.

| Initial Decision Trigger | Long-Term Result |
|---|---|
| “Everyone uses it” | Poor workflow fit |
| Too many features | Complexity overload |
| Cheap monthly pricing | Hidden long-term cost |
| Fast setup | Weak scalability |
| Impulsive switching | Fragmented execution |

This is why tool switching often feels productive while quietly reducing execution stability.

What a Good Decision Scorecard Actually Measures

A useful scorecard evaluates operational reality — not marketing claims.

The most important criteria usually include:

Ease of Daily Use

A tool that looks powerful but increases hesitation is operationally expensive.

Questions to evaluate:

- Can tasks be completed quickly?

- Does the interface reduce friction?

- Is the workflow intuitive under pressure?

Maintenance Overhead

Many systems become exhausting not because they fail, but because they require constant upkeep.

Evaluate:

- setup complexity

- recurring configuration

- workflow maintenance

- automation reliability

Integration Compatibility

Tools rarely operate alone.

The real question is:

how well does the tool fit the existing execution environment?

Evaluate:

- calendar compatibility

- note-system integration

- export flexibility

- automation support

Switching Cost

Most people underestimate migration cost.

Switching tools affects:

- workflows

- habits

- archives

- automation systems

- coordination logic

A high switching cost should count against a new tool unless its operational benefit is substantial.
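One way to apply this rule is to demand a larger score advantage as switching cost rises. The Python sketch below is an assumption, not a standard method. It rates switching cost on a hypothetical 0 to 10 scale and requires the candidate's weighted score to beat the current tool's by `switching_cost * margin_per_cost_point`. The default of 0.2 is an arbitrary illustrative value.

```python
def should_switch(current_score: float, candidate_score: float,
                  switching_cost: int, margin_per_cost_point: float = 0.2) -> bool:
    """Return True only if the candidate beats the current tool by enough
    to cover the migration effort.

    switching_cost: hypothetical 0-10 rating of how much workflows, habits,
    archives, automations, and coordination logic would have to change.
    margin_per_cost_point: assumed tuning knob, not an established constant.
    """
    if not 0 <= switching_cost <= 10:
        raise ValueError("switching_cost must be between 0 and 10")
    required_margin = switching_cost * margin_per_cost_point
    return candidate_score - current_score > required_margin


# Current tool scores 6.5, candidate scores 7.8 (weighted totals on a 1-10 scale).
print(should_switch(6.5, 7.8, switching_cost=3))  # low migration effort
print(should_switch(6.5, 7.8, switching_cost=8))  # heavy migration effort
```

Take a current tool at 6.5 and a candidate at 7.8. A switching cost of 3 requires a margin of 0.6, so the switch passes. A switching cost of 8 requires 1.6, so the same 1.3-point advantage is not enough.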

Long-Term Defensibility

Can the system still work:

- after workload increases?

- after team growth?

- after pricing changes?

- after platform changes?

Short-term convenience often creates long-term instability.

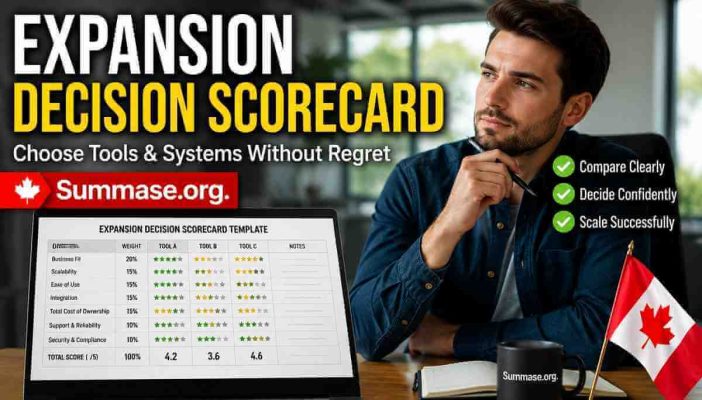

A Practical Decision Scorecard Template

Below is a simplified operational model.

| Criteria | Weight | Tool A | Tool B |
|---|---|---|---|
| Ease of Use | 25% | 8 | 6 |
| Integration Compatibility | 20% | 7 | 9 |
| Maintenance Overhead | 20% | 9 | 5 |
| Export & Backup Flexibility | 15% | 8 | 4 |
| Long-Term Scalability | 20% | 7 | 8 |

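Score every criterion so that a higher number is better. For Maintenance Overhead, this means a high score indicates little upkeep. Multiply each score by its weight and add the results. The final evaluation should reflect weighted operational value, not emotional preference.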
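To make the arithmetic concrete, here is a minimal Python sketch that computes weighted totals for the template above. It assumes scores run from 1 to 10, with higher meaning better. It stores weights as whole percentages so it can check that they add up to exactly 100. The name `weighted_total` is illustrative.

```python
# Template rows: criterion -> (weight %, Tool A score, Tool B score).
# Scores are assumed to be on a 1-10 scale where higher is better.
CRITERIA = {
    "Ease of Use": (25, 8, 6),
    "Integration Compatibility": (20, 7, 9),
    "Maintenance Overhead": (20, 9, 5),
    "Export & Backup Flexibility": (15, 8, 4),
    "Long-Term Scalability": (20, 7, 8),
}


def weighted_total(weights: list[int], scores: list[int]) -> float:
    """Weighted average on the same scale as the scores (weights in whole %)."""
    if sum(weights) != 100:
        raise ValueError(f"weights must sum to 100, got {sum(weights)}")
    return sum(w * s for w, s in zip(weights, scores)) / 100


weights = [w for w, _, _ in CRITERIA.values()]
tool_a = [a for _, a, _ in CRITERIA.values()]
tool_b = [b for _, _, b in CRITERIA.values()]

total_a = weighted_total(weights, tool_a)
total_b = weighted_total(weights, tool_b)
print(f"Tool A: {total_a:.2f}")  # Tool A: 7.80
print(f"Tool B: {total_b:.2f}")  # Tool B: 6.50
```

With these weights, Tool A totals 7.8 and Tool B totals 6.5. Tool B wins on integration and scalability. However, its weaker ease-of-use, maintenance, and export scores cost more than those wins add.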

How to Assign Weights Properly

The biggest mistake in scorecards is giving every criterion the same weight.

In practice, some criteria matter more than others.

For example:

A solo operator may prioritize:

- simplicity

- low maintenance

- fast execution

A distributed team may prioritize:

- collaboration

- permissions

- documentation structure

The scorecard should reflect:

operational priorities, not generic software rankings.
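The same scores can produce a different winner once the weights change. The Python sketch below assumes two hypothetical weight profiles, mapped loosely onto the template's criteria:

- a solo operator who weights ease of use and maintenance heavily
- a distributed team that weights integration and scalability heavily

The percentages are illustrative, not recommendations.

```python
# Criterion keys shared by every profile and every tool.
CRITERIA = ["ease_of_use", "integration", "maintenance", "export", "scalability"]

# Hypothetical weight profiles in whole percentages (each sums to 100).
PROFILES = {
    "solo_operator": {"ease_of_use": 30, "integration": 10, "maintenance": 30,
                      "export": 15, "scalability": 15},
    "distributed_team": {"ease_of_use": 10, "integration": 40, "maintenance": 10,
                         "export": 5, "scalability": 35},
}

# Same 1-10 scores as the template table (higher is better).
SCORES = {
    "Tool A": {"ease_of_use": 8, "integration": 7, "maintenance": 9,
               "export": 8, "scalability": 7},
    "Tool B": {"ease_of_use": 6, "integration": 9, "maintenance": 5,
               "export": 4, "scalability": 8},
}


def weighted_total(weights: dict[str, int], scores: dict[str, int]) -> float:
    """Weighted average score for one tool under one weight profile."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    return sum(weights[c] * scores[c] for c in CRITERIA) / 100


def best_tool(profile: str) -> str:
    """Return the tool with the highest weighted total for a profile."""
    weights = PROFILES[profile]
    return max(SCORES, key=lambda tool: weighted_total(weights, SCORES[tool]))


for profile, weights in PROFILES.items():
    totals = {tool: weighted_total(weights, s) for tool, s in SCORES.items()}
    print(profile, totals, "->", best_tool(profile))
```

Under these assumed profiles, the recommendation flips:

- The solo operator's totals are 8.05 for Tool A and 6.0 for Tool B.
- The team's totals are 7.35 for Tool A and 7.7 for Tool B.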

Hidden Costs Most People Ignore

Context Switching Cost

Every additional tool creates:

- more notifications

- more interfaces

- more mental transitions

This is why reducing the number of tools can improve productivity even without adding automation.
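Context switching is easier to manage once it is counted. This Python sketch assumes you keep an ordered log of which tool you were in; the log and the tool names are hypothetical. The sketch counts every change from one tool to another. It models merging two tools into one with a simple name mapping, which removes the switches between them.

```python
def count_switches(tool_log: list[str]) -> int:
    """Count how often consecutive log entries name different tools."""
    return sum(1 for prev, curr in zip(tool_log, tool_log[1:]) if prev != curr)


def consolidate(tool_log: list[str], merged: dict[str, str]) -> list[str]:
    """Rename tools that would be merged into one (hypothetical mapping)."""
    return [merged.get(tool, tool) for tool in tool_log]


# Hypothetical morning of work, one entry per block of activity.
morning = ["email", "tasks", "chat", "tasks", "notes", "chat", "email"]

before = count_switches(morning)
after = count_switches(consolidate(morning, {"tasks": "workspace", "notes": "workspace"}))
print(f"switches before: {before}, after merging tasks and notes: {after}")
```

Here the log drops from six switches to five. That is a small gain from removing one tool, and it compounds across a full day.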

Related implementation guide:

👉 [Context Switching Control: Batching, Focus Blocks, and WIP Limits]

Subscription Accumulation

Small recurring costs compound quietly over time.

This becomes especially important in:

- USD-based SaaS ecosystems

- cross-border billing environments

- multi-tool stacks
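Compounding is easier to see with numbers attached. The sketch below totals a subscription stack over several years. The monthly prices, the annual price increase, and the USD-to-local exchange rate are all hypothetical inputs you would replace. The exchange rate is held constant for simplicity.

```python
def stack_cost(monthly_prices_usd: dict[str, float], years: int,
               annual_increase: float = 0.0, usd_to_local: float = 1.0) -> float:
    """Total cost of a subscription stack over `years`, in local currency.

    annual_increase: assumed yearly price rise (0.10 means 10%).
    usd_to_local: assumed constant exchange rate applied to every payment.
    """
    monthly_total = sum(monthly_prices_usd.values())
    total_usd = sum(monthly_total * 12 * (1 + annual_increase) ** year
                    for year in range(years))
    return total_usd * usd_to_local


# Hypothetical four-tool stack, prices in USD per month.
stack = {"tasks": 10.0, "notes": 8.0, "calendar": 6.0, "automation": 20.0}

print(f"1 year: {stack_cost(stack, 1):.2f}")
print(f"3 years, flat prices: {stack_cost(stack, 3):.2f}")
print(f"3 years, +10%/year: {stack_cost(stack, 3, annual_increase=0.10):.2f}")
```

With these made-up prices, $44 a month becomes $528 a year. Over three years that is $1,584 at flat prices, or $1,747.68 if every price rises 10% a year. For billing in another currency, pass your own `usd_to_local` rate.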

For regional implementation examples:

👉 Canada: Subscription-Heavy Productivity Systems & Practical Tool Decisions

Export Dependency

A tool that traps data creates operational risk.

Before adopting any platform, evaluate:

- export formats

- backup options

- migration difficulty

- API accessibility
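These checks can be written down as a short lock-in review. The sketch below is illustrative: the set of formats treated as open is an assumption you should adjust. The inputs are yes/no answers you gather from the tool's documentation. Migration difficulty is left out because it usually needs a trial export rather than a flag.

```python
# Formats assumed to be readable without the original tool; adjust as needed.
OPEN_FORMATS = {"csv", "json", "markdown", "html", "ics", "opml"}


def lock_in_risks(export_formats: set[str], has_backup: bool, has_api: bool) -> list[str]:
    """List export-dependency risks for one tool (an empty list means none found)."""
    risks = []
    if not {fmt.lower() for fmt in export_formats} & OPEN_FORMATS:
        risks.append("no export in an open format")
    if not has_backup:
        risks.append("no backup option")
    if not has_api:
        risks.append("no API access for scripted migration")
    return risks


print(lock_in_risks({"JSON", "PDF"}, has_backup=True, has_api=False))
```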

Related framework:

👉 [Backup & Export Plans: Avoiding Lock-In With Productivity Tools]

When a Tool Should Be Rejected Immediately

Some tools should fail evaluation before detailed scoring begins.

Reject tools when:

- exports are restricted

- pricing structure is unstable

- workflows require excessive maintenance

- the system increases fragmentation

- collaboration logic is unclear

- onboarding complexity exceeds operational benefit

Not every tool deserves deep evaluation.
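Because these are pass/fail conditions, they work as a filter that runs before any weighted scoring. Below is a minimal Python sketch. It assumes each condition has already been judged as a yes/no flag for every candidate. The `Candidate` fields and tool names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Yes/no judgements made before detailed scoring (hypothetical fields)."""
    name: str
    exports_restricted: bool = False
    pricing_unstable: bool = False
    excessive_maintenance: bool = False
    increases_fragmentation: bool = False
    unclear_collaboration: bool = False
    onboarding_exceeds_benefit: bool = False


def rejection_reasons(c: Candidate) -> list[str]:
    """Return every knockout condition the candidate fails, in checklist order."""
    gates = {
        "exports are restricted": c.exports_restricted,
        "pricing structure is unstable": c.pricing_unstable,
        "workflows require excessive maintenance": c.excessive_maintenance,
        "the system increases fragmentation": c.increases_fragmentation,
        "collaboration logic is unclear": c.unclear_collaboration,
        "onboarding complexity exceeds operational benefit": c.onboarding_exceeds_benefit,
    }
    return [reason for reason, failed in gates.items() if failed]


def shortlist(candidates: list[Candidate]) -> list[Candidate]:
    """Keep only candidates that pass every gate; only these get scored."""
    return [c for c in candidates if not rejection_reasons(c)]


candidates = [
    Candidate("Tool A"),
    Candidate("Tool B", exports_restricted=True),
    Candidate("Tool C", pricing_unstable=True, unclear_collaboration=True),
]
for c in candidates:
    print(c.name, rejection_reasons(c) or "passes")
print("Score only:", [c.name for c in shortlist(candidates)])
```

Running the gates first keeps the weighted comparison small. A tool that fails any gate never reaches the scoring stage.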

Real-World Example: Choosing a Task Management System

Imagine comparing:

- a highly customizable platform

- a simpler operational tool

The customizable system may initially score higher in flexibility.

But after weighting:

- maintenance burden

- onboarding time

- workflow consistency

- execution speed

…the simpler system may produce better long-term results.

Operational stability often beats theoretical flexibility.

Common Mistakes That Destroy Scorecard Quality

Scoring Based on Excitement

New interfaces create emotional bias.

A scorecard exists specifically to reduce this problem.

Ignoring Maintenance Cost

A powerful tool that requires constant adjustment creates long-term friction.

Overvaluing Features

More features do not automatically improve execution quality.

In many cases:

- feature overload

- notification density

- excessive customization

actually reduce consistency.

No Review Process

Operational systems change over time.

A tool decision should be reviewed periodically using updated constraints.
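One lightweight way to make reviews happen is to record when each tool decision was last reviewed and flag the ones that are overdue. The sketch below assumes a 90-day review interval and made-up decision dates. Both are placeholders, not recommendations.

```python
from datetime import date, timedelta


def reviews_due(last_reviewed: dict[str, date], today: date,
                interval_days: int = 90) -> list[str]:
    """Return decisions whose last review is at least interval_days old, sorted by name."""
    cutoff = today - timedelta(days=interval_days)
    return sorted(name for name, reviewed in last_reviewed.items() if reviewed <= cutoff)


# Hypothetical decision log: tool decision -> date it was last reviewed.
decisions = {
    "task manager": date(2024, 1, 10),
    "notes app": date(2024, 5, 2),
    "calendar": date(2023, 11, 20),
}

print(reviews_due(decisions, today=date(2024, 6, 1)))
```

Each flagged decision is then re-scored with the current weights and constraints, rather than the ones that applied when the tool was adopted.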

Related framework:

👉 [Weekly Review Protocol: A 20-Minute Decision Reset]

Building a More Reliable Decision Environment

A scorecard is not just a comparison template.

It is part of a broader operational discipline.

The goal is:

- fewer impulsive decisions

- clearer trade-offs

- more stable workflows

- lower execution friction

This aligns directly with the broader:

👉 Decision Efficiency System: A Practical Operating Model

where recurring decisions are converted into structured logic instead of repeated mental effort.

FAQ

Should every tool decision use a scorecard?

No.

Simple decisions do not require heavy evaluation.

Scorecards are most useful when:

- switching cost is high

- subscriptions are recurring

- workflows are affected

- collaboration systems are involved

What is the ideal number of criteria?

Usually between four and seven.

Too few criteria produce a shallow evaluation.

Too many add unnecessary complexity.
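These rules of thumb are easy to check mechanically. This sketch flags a scorecard whose criterion count falls outside the four-to-seven range. It also flags weights that are not positive whole percentages summing to 100. The 100-point convention is an assumption carried over from the template's percentage weights.

```python
def scorecard_problems(weights: dict[str, int],
                       min_criteria: int = 4, max_criteria: int = 7) -> list[str]:
    """Return structural problems with a scorecard's criteria and weights."""
    problems = []
    count = len(weights)
    if count < min_criteria:
        problems.append(f"only {count} criteria: evaluation may be shallow")
    elif count > max_criteria:
        problems.append(f"{count} criteria: consider merging some")
    total = sum(weights.values())
    if total != 100:
        problems.append(f"weights sum to {total}%, not 100%")
    if any(w <= 0 for w in weights.values()):
        problems.append("every criterion needs a positive weight")
    return problems


template = {"Ease of Use": 25, "Integration Compatibility": 20,
            "Maintenance Overhead": 20, "Export & Backup Flexibility": 15,
            "Long-Term Scalability": 20}
print(scorecard_problems(template))  # []
print(scorecard_problems({"Price": 50, "Features": 40, "Looks": 0}))
```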

Can scorecards work for personal decisions too?

Yes.

The same framework can evaluate:

- software

- workflows

- automation systems

- operational processes

- collaboration structures

The principle remains the same:

reduce emotional decision-making through structured comparison.

Smarter Tool Decisions Create Better Systems

Most workflow problems are not caused by bad intentions.

They are caused by unstable operational decisions repeated over time.

A decision scorecard helps create:

- clearer evaluation logic

- lower switching risk

- stronger execution consistency

- more sustainable productivity infrastructure

Before adopting another tool, pause the comparison cycle long enough to evaluate the operational consequences properly.

Better systems usually begin with better decisions — not more software.